Understanding the Fragmentation

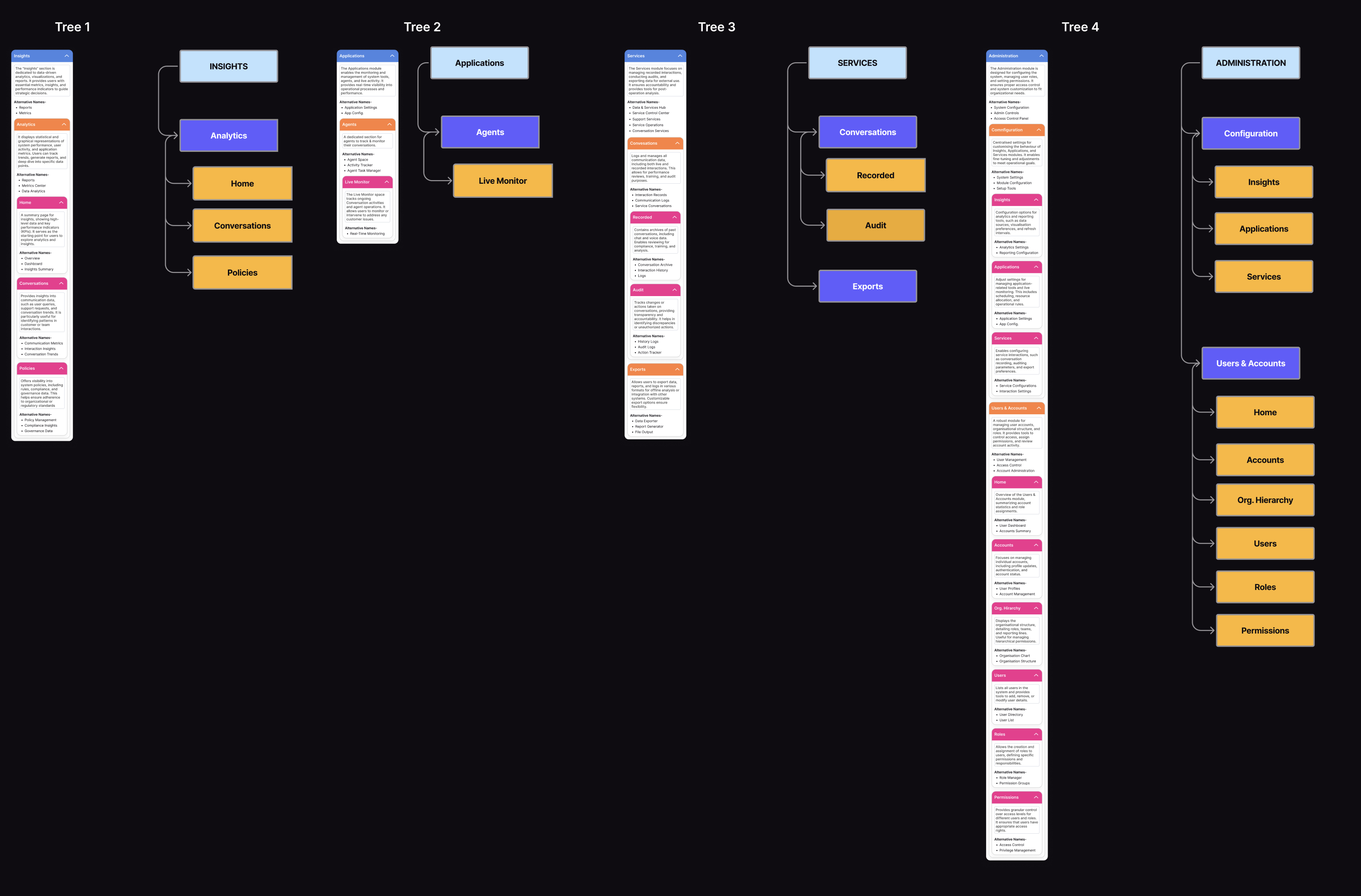

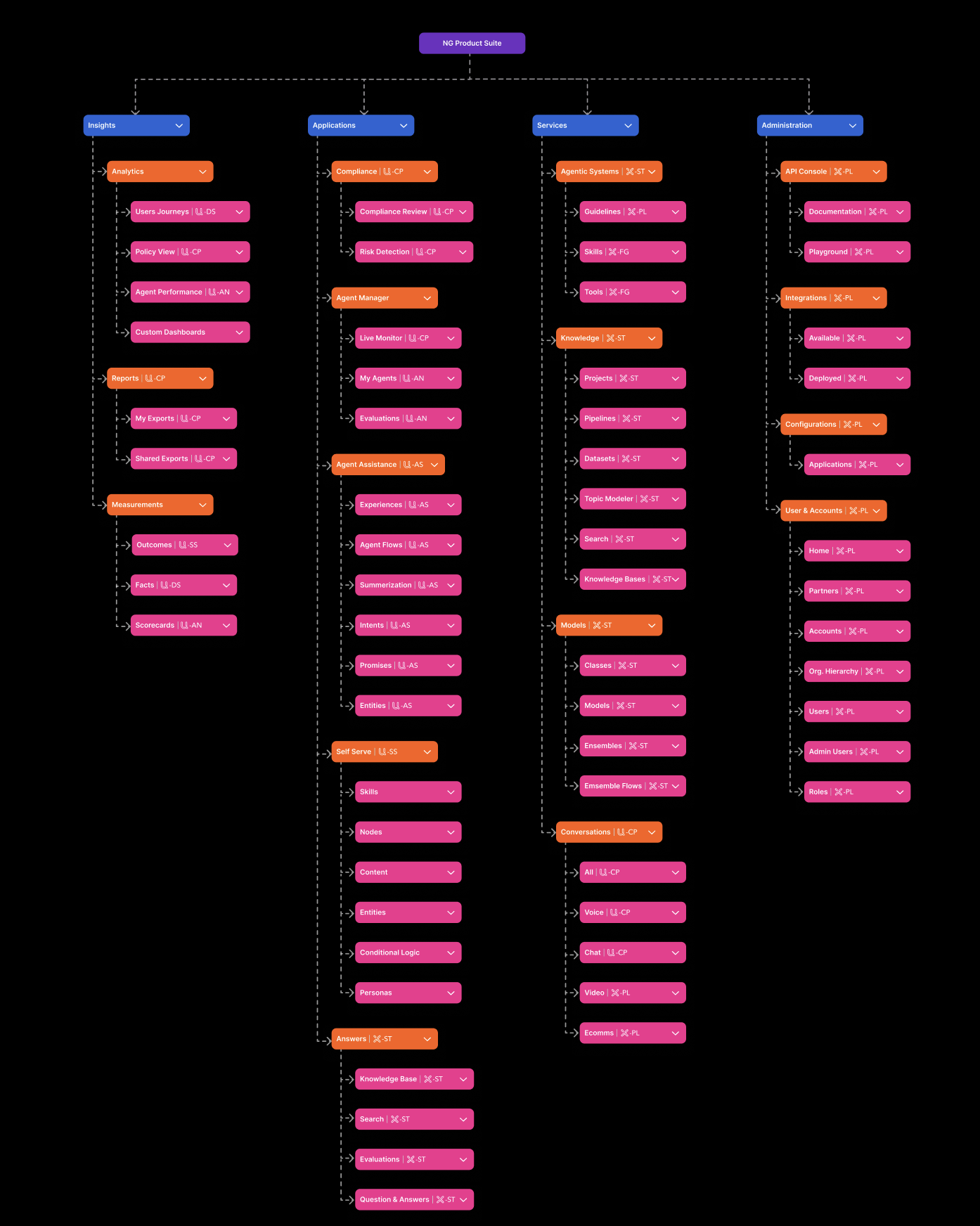

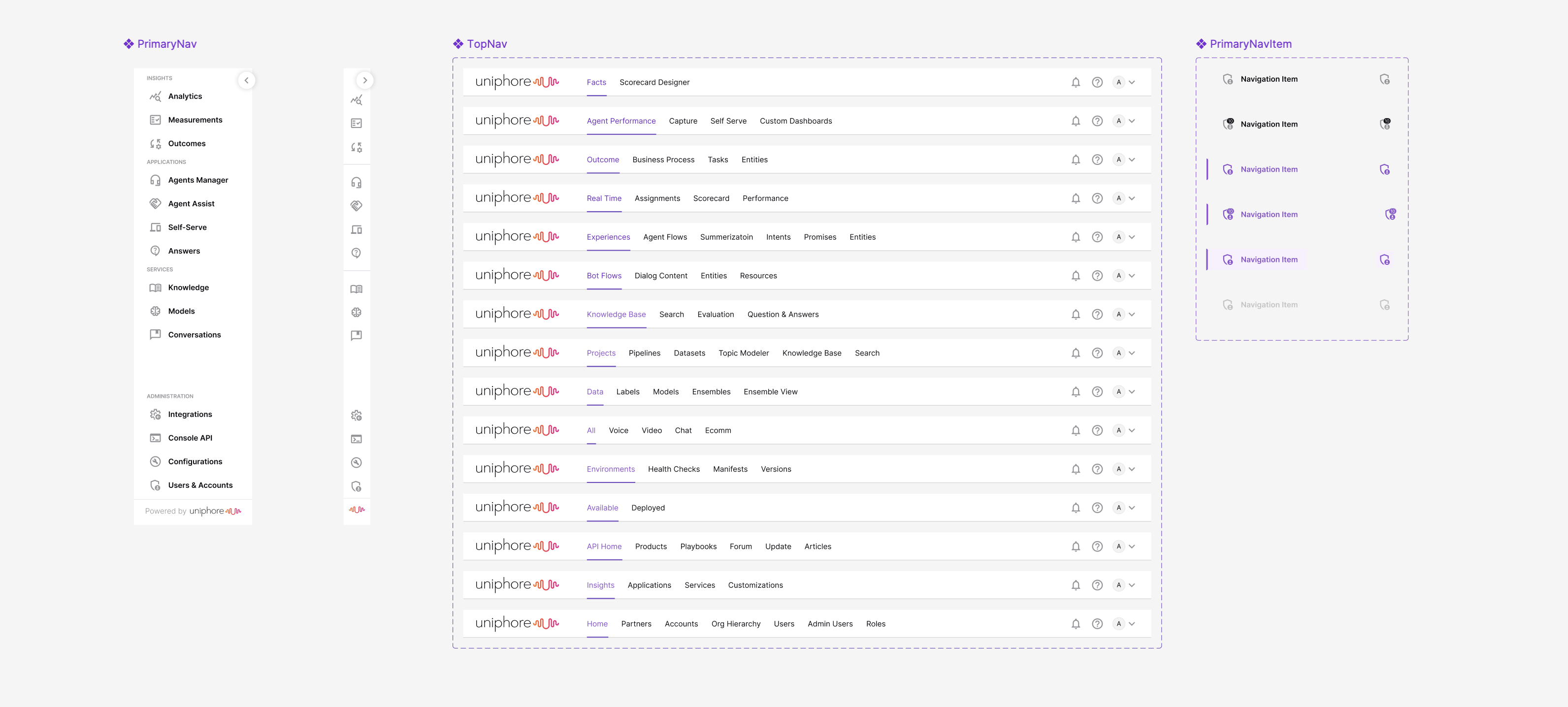

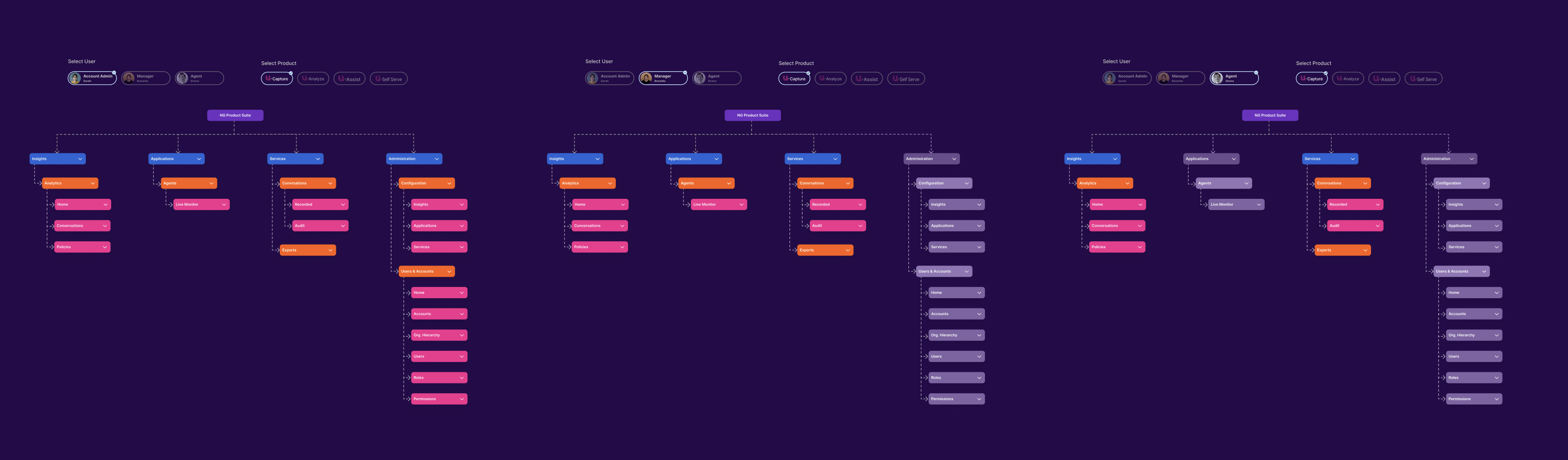

Before proposing any solutions, I needed to fully understand the scope and impact of the fragmentation. I spent three weeks conducting discovery research, which included analyzing support tickets, interviewing customers, shadowing users, and auditing each product’s information architecture.

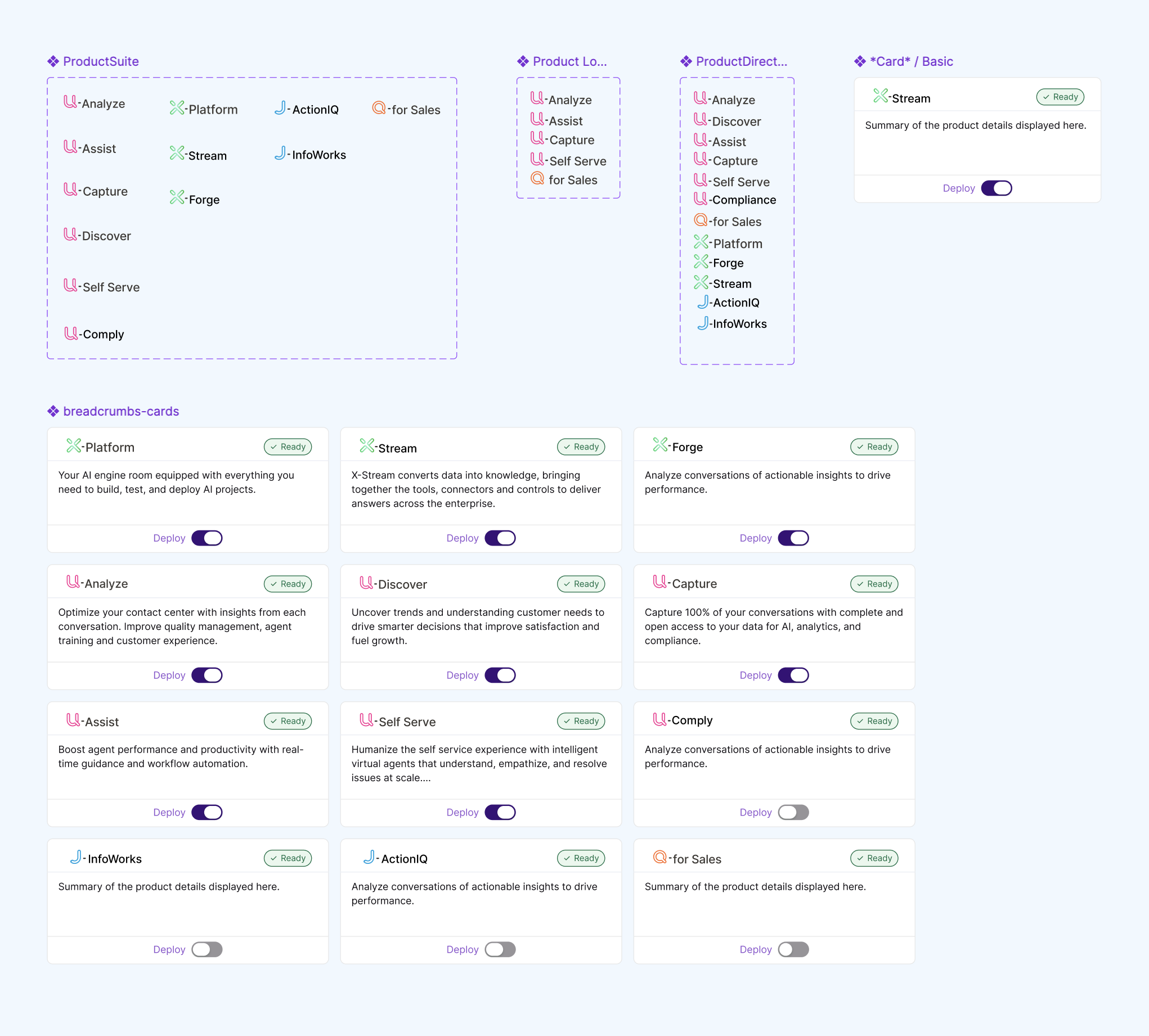

What I discovered was worse than expected. The fragmentation wasn’t just a UX inconvenience; it was actively preventing customers from getting value from our products. Many customers used only 2–3 of our 12 products, not because they didn’t need the others, but because learning and managing additional systems took too much effort.

Support ticket analysis revealed that 23% of all tickets were related to navigation confusion, permission issues across products, or questions about how features in different products related to each other.

“My team refuses to use [Product X] because it looks completely different.” — Operations Director

“I’ve given up trying to explain how all these tools fit together to new hires.” — Training Manager

Higher support costs: Navigation and access issues consumed 23% of support resources.

Longer onboarding: Time-to-value was 6 weeks, with 2 weeks spent just learning multiple interfaces.